Model-Agnostic Orchestration Is the New AI Stack


The next durable advantage in AI systems will not come from betting everything on a single frontier model. It will come from building orchestration layers that can route work across many models, tools, memory systems, and execution environments.

A single model can be impressive. A routed system can be operational.

That sounds abstract until you look at what modern AI work actually involves day to day.

Over the last week, my own workflow has moved across product development, sales-signal extraction, recruiting automation, AI service content, local model testing, usage analytics, search configuration, long-context memory, and agent infrastructure. Some tasks required deep reasoning over a codebase. Some required fast classification. Some required cheap summarization. Some needed private local inference. Some needed a stronger remote model. Some needed search. Some needed passive memory.

No single model is the best choice for all of that.

This is why model-agnostic orchestration is becoming a design goal. The question is no longer, “Which model should we use?” The better question is:

Which model, tool, and memory layer should own this step of the workflow?

From Model Selection to Workload Routing

The first generation of AI adoption was model-centric. Teams picked a vendor, wrapped a chat interface around it, and hoped that better models would solve more of the product surface over time.

That worked when the task was simple: generate copy, answer questions, summarize a document, draft an email.

But agentic systems are different. They run for longer. They accumulate context. They call tools. They make decisions across messy business systems. They touch customer data, internal documents, websites, spreadsheets, CRMs, chat threads, codebases, and local files.

Once that happens, the model becomes only one component of a larger system.

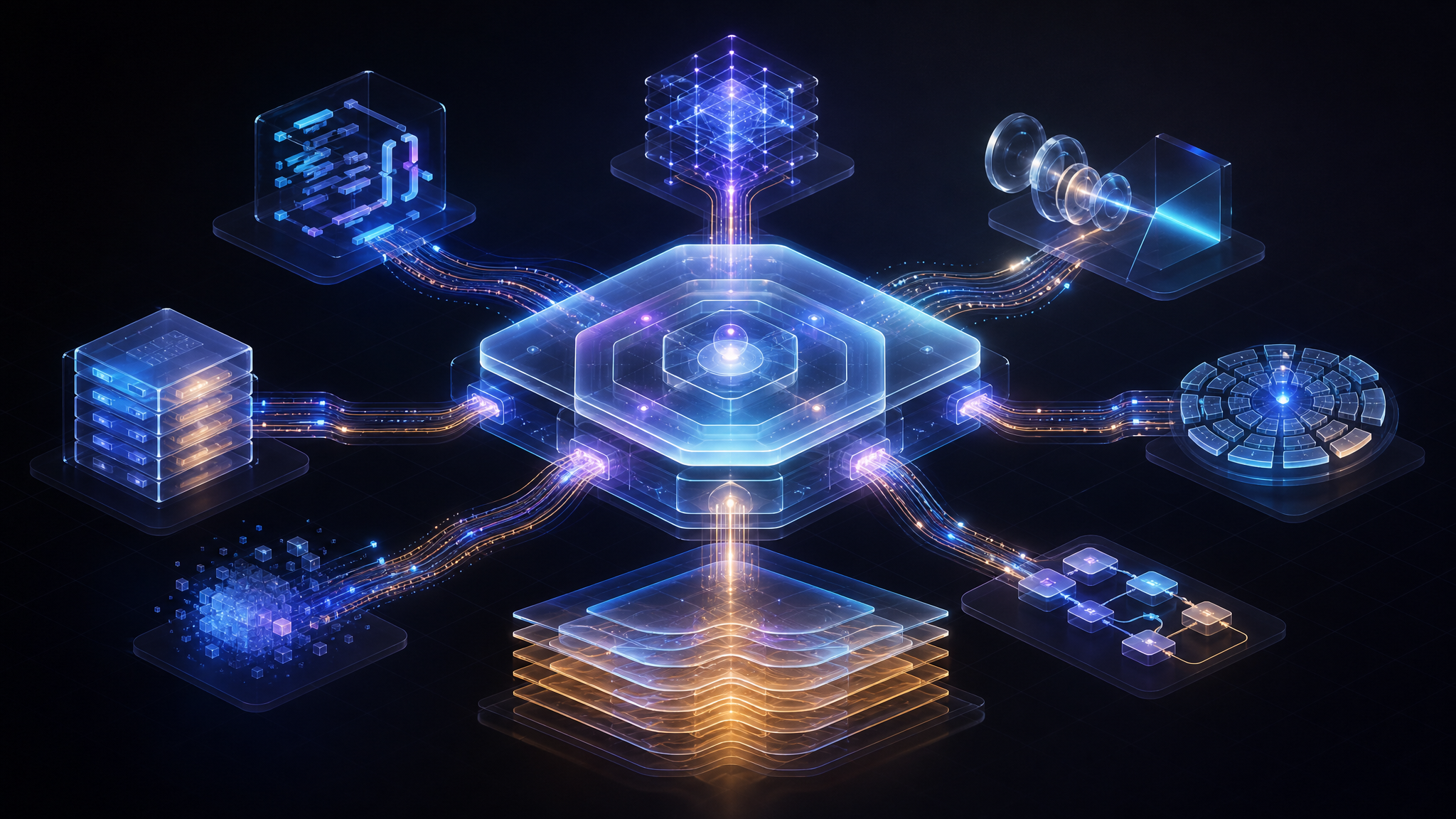

A practical AI stack needs at least four layers:

- The orchestration layer — decides what should happen next, which tool should run, which model should be used, and what context is allowed into the step.

- The model layer — includes frontier reasoning models, coding models, open-weight models, local models, small classifiers, long-context models, and fallback models.

- The memory layer — stores durable context, compressed summaries, user preferences, activity history, decisions, and unresolved tasks.

- The telemetry layer — tracks cost, latency, failures, quality, retries, and model-specific behavior.

In this architecture, models are swappable. The harness, meaning the orchestration code that wraps every model call, is the control plane.

What the Last Week Made Obvious

A normal workweek is a better test of AI architecture than a benchmark leaderboard.

In one thread, I was refining a sales-signal product: improving lead classification, prioritizing commercial opportunities, integrating project metadata, tuning relevance scores, and separating useful buying signals from operational noise. That kind of system needs extraction, classification, enrichment, ranking, deduplication, and user-facing explanation. It is not one prompt. It is a pipeline.

In another thread, I was working on AI recruiting flows: questionnaires, candidate review surfaces, and profile-level evaluation. This requires a different mix of capabilities: structured intake, fair comparison, document parsing, scoring, and human-readable summaries.

In another, I was developing content and service pages around AI automation, AI training, inference server deployment, and split-brain AI architectures. That work benefits from a strong writing model, but also from keyword research, technical accuracy checks, competitive positioning, and source synthesis.

In parallel, I was tuning agent infrastructure: testing local/open models, checking token costs through observability data, configuring tool calling, replacing incompatible search plumbing, and repairing the passive memory path so recent computer activity could flow back into the agent context.

These are not separate categories of work. They are all the same pattern: route the right kind of cognition to the right place.

The Split-Brain Pattern

One of the most useful patterns is split-brain configuration: do not use the same model for every cognitive operation.

A long-running agent might need a very strong model for planning, code edits, or final judgment. But that does not mean every background step deserves the same model.

Compaction is the clearest example.

Agents accumulate context quickly. A useful assistant may carry dozens or hundreds of turns of history, tool results, decisions, names, dates, links, prices, and unresolved tasks. If all of that context is repeatedly sent into the most expensive model, the system becomes slow and expensive. If it is aggressively truncated, the system becomes forgetful.

So compaction becomes a first-class workload.

A compaction model does not need to be the best reasoning model in the world. It needs to be reliable, inexpensive enough to run often, and good at preserving operational details. It should keep decisions, constraints, identifiers, names, dates, pending tasks, and failure modes. It should remove noise without destroying continuity.

That is a different model-selection problem than code generation or strategic planning.
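Here is a minimal Python sketch of compaction as its own step. It assumes a rough 4-characters-per-token estimate and a hypothetical `summarize(instructions, transcript)` callable standing in for whatever cheap compaction model you route to. Recent turns stay verbatim, and older turns collapse into one summary that is explicitly asked to keep operational details.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Turn:
    role: str
    text: str

# Assumption: roughly 4 characters per token; use a real tokenizer in practice.
def approx_tokens(text: str) -> int:
    return max(1, len(text) // 4)

# Hypothetical instruction text for the compaction model.
COMPACTION_INSTRUCTIONS = (
    "Summarize the earlier conversation for an agent that will continue the work. "
    "Preserve decisions, constraints, identifiers, names, dates, links, prices, "
    "pending tasks, and known failure modes. Drop repetition and tool-output noise."
)

def compact(history: list[Turn], budget: int, keep_recent: int,
            summarize: Callable[[str, str], str]) -> list[Turn]:
    """If history exceeds the token budget, replace everything except the most
    recent `keep_recent` turns with a single summary turn."""
    total = sum(approx_tokens(t.text) for t in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # fits, or nothing old enough to compact
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    transcript = "\n".join(f"{t.role}: {t.text}" for t in older)
    summary = summarize(COMPACTION_INSTRUCTIONS, transcript)  # cheap compaction model
    return [Turn("system", "Summary of earlier work:\n" + summary)] + recent
```

Injecting `summarize` keeps the compaction model a configuration choice rather than something hard-wired into the agent loop.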

In practice, a cost-efficient long-context model such as GLM-5.1 can be valuable for this kind of fallback and memory-compression work, while stronger coding or reasoning models remain reserved for high-leverage tasks.

The best model for the job is not always the smartest model. Sometimes it is the model that can do the boring work reliably, cheaply, and often.
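A split-brain setup can be as small as a config object that maps each cognitive operation to a model. This is a sketch with placeholder model names (not real provider IDs); the operation names and the override mechanism are my own assumptions for illustration.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class SplitBrainConfig:
    # Placeholder model names, not real provider IDs.
    primary: str = "strong-reasoning-model"
    compaction: str = "long-context-cheap-model"
    fallback: str = "long-context-cheap-model"
    overrides: dict[str, str] = field(default_factory=dict)

    def model_for(self, operation: str) -> str:
        """Explicit overrides win; compaction-type work goes to the cheap model."""
        if operation in self.overrides:
            return self.overrides[operation]
        if operation in ("compact", "summarize"):
            return self.compaction
        return self.primary

    def chain_for(self, operation: str) -> list[str]:
        """Preferred model first, then the fallback, without duplicates."""
        first = self.model_for(operation)
        return [first] if first == self.fallback else [first, self.fallback]

cfg = SplitBrainConfig(overrides={"classify": "small-fast-model"})
```

Planning and final judgment stay on the primary model, while compaction and fallback traffic land on the cheaper long-context one.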

Local Models Have a Different Job

Local and open models are not just cheaper versions of frontier APIs. They unlock a different operating mode.

A local Qwen-style model served through vLLM, or through any other server that exposes an OpenAI-compatible endpoint, can be used for high-volume experimentation, private workflows, tool-calling tests, and iterative agent loops where cost or data sensitivity would otherwise become a bottleneck.

That does not mean the local model has to beat the best hosted model on every benchmark. It only has to be good enough for the part of the pipeline it owns.
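Because the endpoint speaks the OpenAI chat-completions format, the calling code stays the same whether the model is local or hosted. This standard-library sketch builds the request without sending it. It assumes vLLM's default `http://localhost:8000/v1` base URL, and `local-qwen-model` is a placeholder for whatever name your server registers.

```python
import json
import urllib.request

def build_chat_request(base_url: str, model: str, messages: list[dict],
                       api_key: str = "EMPTY", temperature: float = 0.0) -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-compatible /chat/completions route."""
    payload = {"model": model, "messages": messages, "temperature": temperature}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )

def send(req: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]

# Placeholder model name; point base_url at a hosted provider to swap backends.
req = build_chat_request("http://localhost:8000/v1", "local-qwen-model",
                         [{"role": "user", "content": "Classify: pricing request from an existing customer"}])
```

Switching from local to hosted is then a change of `base_url`, `model`, and `api_key`, not a rewrite of the workflow.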

For example:

- Local/open models can classify, extract, pre-rank, or draft.

- Stronger hosted models can handle architecture, ambiguous reasoning, and final review.

- Long-context cost-efficient models can compact, summarize, and provide fallbacks.

- Small fast models can filter noise before expensive calls happen.

- Search and retrieval tools can ground the workflow before any model is asked to reason.

The value is not in replacing one model with another. The value is in making model choice programmable.
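One way to make model choice programmable is an ordered list of routing rules where the first match wins. In this sketch, the tier names, task kinds, and the `Task` fields are illustrative assumptions that mirror the division of labor listed above.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Task:
    kind: str              # e.g. "classify", "extract", "review", "compact"
    sensitive: bool = False
    stakes: str = "low"    # "low" or "high"

# Ordered routing rules: the first matching predicate wins. Tier names are placeholders.
RULES: list[tuple[Callable[[Task], bool], str]] = [
    (lambda t: t.sensitive, "local-open"),                  # privacy boundary first
    (lambda t: t.stakes == "high", "hosted-frontier"),
    (lambda t: t.kind in {"filter", "triage"}, "small-fast"),
    (lambda t: t.kind in {"classify", "extract", "pre-rank", "draft"}, "local-open"),
    (lambda t: t.kind in {"compact", "summarize"}, "long-context-cheap"),
]

def route(task: Task, default: str = "hosted-frontier") -> str:
    for predicate, tier in RULES:
        if predicate(task):
            return tier
    return default
```

Putting the privacy rule first means sensitive data never leaves the local boundary, even for high-stakes work; reorder the list if your policy differs.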

Memory Is Infrastructure, Not a Feature

The most underrated part of agent orchestration is memory.

If an agent cannot remember what happened yesterday, it will waste tokens rediscovering context. If it cannot distinguish durable facts from transient noise, it will become confidently wrong. If memory depends on a fragile transport path, the system will silently degrade.

That is why passive memory matters.

A useful memory layer watches activity across the work environment, captures relevant context, and makes it queryable later. It can turn a week of scattered work — chats, browser sessions, code edits, product screens, usage dashboards, client threads, and research — into a coherent picture of what has actually been happening.
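At its simplest, the query side of passive memory is a time-window lookup over captured events. This in-memory sketch is hypothetical (a real store would persist and index events), but it shows the shape: capture continuously, then ask for "what happened this week" without reconstructing it by hand.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass(frozen=True)
class ActivityEvent:
    at: datetime
    source: str    # e.g. "browser", "editor", "chat"
    summary: str

class ActivityLog:
    """In-memory stand-in for a passive memory store."""
    def __init__(self) -> None:
        self._events: list[ActivityEvent] = []

    def capture(self, event: ActivityEvent) -> None:
        self._events.append(event)

    def recent(self, now: datetime, window: timedelta,
               source: Optional[str] = None) -> list[ActivityEvent]:
        """Events inside [now - window, now], newest first, optionally filtered by source."""
        start = now - window
        hits = [e for e in self._events
                if start <= e.at <= now and (source is None or e.source == source)]
        return sorted(hits, key=lambda e: e.at, reverse=True)
```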

But memory has to be engineered like infrastructure:

- The transport path must be monitored.

- Failed syncs should not overwrite good context with empty context.

- The system should recover automatically when tunnels or services restart.

- Queries should retrieve recent context without requiring the user to manually reconstruct the week.

- The agent should know when memory is unavailable instead of pretending.

Without this, “AI memory” is just another unreliable cache. With it, memory becomes the substrate that lets orchestration improve over time.
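Several of those rules fit in a small sync wrapper. This is a sketch under assumptions: `fetch` is a hypothetical transport call (tunnel, HTTP, file sync), and "an empty result after a good one is suspect" is the policy I chose to implement. It retries with exponential backoff, never replaces a good snapshot with an empty one, and produces a plain-language note the agent can include in its context.

```python
import time
from typing import Callable, Optional

class MemorySync:
    """Keeps the last good snapshot and tells the agent when memory is stale."""
    def __init__(self, fetch: Callable[[], list[str]], retries: int = 3, backoff_s: float = 0.5):
        self.fetch = fetch              # hypothetical transport call
        self.retries = retries
        self.backoff_s = backoff_s
        self.snapshot: list[str] = []
        self.available = False
        self.last_error: Optional[str] = None

    def sync(self) -> bool:
        for attempt in range(self.retries):
            try:
                fresh = self.fetch()
            except Exception as exc:    # transport failure: keep the old snapshot
                self.last_error = repr(exc)
                time.sleep(self.backoff_s * (2 ** attempt))
                continue
            if not fresh and self.snapshot:
                # An empty result after a good one is treated as suspect, not as truth.
                self.last_error = "empty snapshot rejected"
                self.available = False
                return False
            self.snapshot, self.available, self.last_error = fresh, True, None
            return True
        self.available = False
        return False

    def context_note(self) -> str:
        """A line the agent can put in its prompt instead of pretending."""
        if self.available:
            return f"Memory: {len(self.snapshot)} recent items loaded."
        return f"Memory sync failed ({self.last_error}); recent context may be missing or stale."
```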

The Harness Owns the Workflow

The model should not own the workflow. The harness should.

The harness decides:

- Which model gets the task.

- Which tools are available.

- Which context enters the prompt.

- Which memories are retrieved.

- Which steps need human approval.

- Which outputs should be compacted.

- Which failures trigger fallbacks.

- Which telemetry should be recorded.

This is the difference between using AI and operating an AI system.

A model call is a moment of intelligence. An orchestration layer is an operating system for intelligence.
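To make "the harness decides" concrete, here is a hypothetical planning step. The harness fills in model, tools, allowed context, and the approval requirement before any model runs, then records what happened. The step kind, the `tag:text` context format, and the model and tool names are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class StepPlan:
    model: str
    tools: list[str]
    context: list[str]
    needs_approval: bool

def plan_step(kind: str, context: list[str], allowed_tags: set[str]) -> StepPlan:
    """Hypothetical policy: the harness, not the model, fills in every field."""
    # Context items are assumed to look like "tag:text"; only allowed tags get through.
    filtered = [c for c in context if c.split(":", 1)[0] in allowed_tags]
    if kind == "send_client_email":   # external and hard to undo: strong model + human gate
        return StepPlan("strong-model", ["email.draft"], filtered, needs_approval=True)
    return StepPlan("local-model", ["search"], filtered, needs_approval=False)

def execute(plan: StepPlan, approve: Callable[[StepPlan], bool],
            call: Callable[[str, StepPlan], str], log: list[dict]) -> Optional[str]:
    """Run a planned step, honoring the approval gate and recording telemetry."""
    if plan.needs_approval and not approve(plan):
        log.append({"model": plan.model, "status": "rejected"})
        return None
    output = call(plan.model, plan)
    log.append({"model": plan.model, "status": "ok", "context_items": len(plan.context)})
    return output
```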

Telemetry Makes Routing Possible

Model-agnostic orchestration only works if routing decisions are observable.

You need to know which model is expensive, which model is slow, which model fails at tool calling, which model handles long context well, and which model produces outputs that require heavy correction.

That is why usage analytics and trace data matter. Checking token usage, comparing provider pricing, watching failed tool calls, and validating search-provider behavior are not administrative chores. They are how the routing layer gets smarter.

The stack should answer questions like:

- Which tasks are consuming the most tokens?

- Which model is overqualified for the job?

- Which cheap model is good enough for preprocessing?

- Which fallback actually recovers from failure?

- Which tool integration is breaking the agent loop?

- Which tasks should move local for privacy or cost?

Without telemetry, “use multiple models” becomes random. With telemetry, it becomes engineering.
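Several of those questions reduce to simple aggregations over trace records. In this sketch, the `CallRecord` fields are an assumed trace shape, and prices are passed in as (input, output) USD per million tokens that you would take from your own providers.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class CallRecord:
    task: str
    model: str
    input_tokens: int
    output_tokens: int
    ok: bool
    latency_s: float

def telemetry_report(records: list[CallRecord],
                     prices: dict[str, tuple[float, float]]) -> dict:
    """prices maps model -> (input, output) USD per million tokens."""
    tokens_by_task = defaultdict(int)
    cost_by_model = defaultdict(float)
    calls = defaultdict(int)
    failures = defaultdict(int)
    latency = defaultdict(float)
    for r in records:
        tokens_by_task[r.task] += r.input_tokens + r.output_tokens
        p_in, p_out = prices[r.model]
        cost_by_model[r.model] += (r.input_tokens * p_in + r.output_tokens * p_out) / 1_000_000
        calls[r.model] += 1
        failures[r.model] += 0 if r.ok else 1
        latency[r.model] += r.latency_s
    return {
        "top_tasks": sorted(tokens_by_task.items(), key=lambda kv: kv[1], reverse=True),
        "cost_by_model": dict(cost_by_model),
        "failure_rate": {m: failures[m] / calls[m] for m in calls},
        "mean_latency_s": {m: latency[m] / calls[m] for m in calls},
    }
```

A task that tops `top_tasks` while running on an expensive model is the first candidate to move to a cheaper tier.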

A Practical Routing Framework

A useful model-agnostic system can start with a simple routing framework:

1. Use the strongest model for irreversible decisions

Architecture changes, codebase-wide edits, strategy, final copy, high-stakes client communication, and ambiguous reasoning deserve the best available model.

2. Use local/open models for volume and privacy

Extraction, tagging, drafting, internal iteration, experimentation, and sensitive workflows are often better served by local or open-weight models behind a compatible endpoint.

3. Use specialized models for memory and compaction

Compaction, summarization, and long-context continuity are not side tasks. They are specialized workloads. Route them intentionally.

4. Use small models before large models

Filter noise first. Classify cheaply. Retrieve before reasoning. Do not pay a premium model to read irrelevant context.
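A minimal sketch of this rule: a cheap relevance scorer gates what the premium model is allowed to read. Here a keyword check is a hypothetical stand-in for a small classifier, and the 0.5 threshold is an arbitrary assumption to tune against your own telemetry.

```python
from typing import Callable

def cheap_then_premium(docs: list[str], relevance: Callable[[str], float],
                       premium: Callable[[list[str]], str],
                       threshold: float = 0.5) -> tuple[str, int]:
    """Score every document cheaply, then send only the relevant ones to the premium
    model. Returns (answer, number of documents the premium model read)."""
    kept = [d for d in docs if relevance(d) >= threshold]
    if not kept:
        return "no relevant context; premium model not called", 0
    return premium(kept), len(kept)

# Hypothetical stand-in for a small classifier.
def keyword_relevance(doc: str) -> float:
    return 1.0 if "invoice" in doc.lower() else 0.0
```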

5. Keep the interface model-neutral

Expose models through common interfaces where possible. Keep prompts, tool schemas, and evaluation criteria portable. The more model-specific the workflow becomes, the harder it is to improve later.
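In Python, a `typing.Protocol` is one lightweight way to keep workflow code model-neutral. This sketch keeps tool definitions as plain JSON Schema and converts them to the OpenAI-style `{"type": "function", "function": {...}}` shape only at the edge. `ScriptedModel` is a hypothetical test double; a real adapter would call a provider.

```python
from dataclasses import dataclass
from typing import Optional, Protocol

@dataclass(frozen=True)
class ToolSpec:
    """Provider-neutral tool description; parameters is plain JSON Schema."""
    name: str
    description: str
    parameters: dict

def to_openai_tool(spec: ToolSpec) -> dict:
    # Shape used by OpenAI-style chat completion APIs.
    return {"type": "function",
            "function": {"name": spec.name, "description": spec.description,
                         "parameters": spec.parameters}}

class ChatModel(Protocol):
    def complete(self, messages: list[dict], tools: Optional[list[ToolSpec]] = None) -> str: ...

class ScriptedModel:
    """Test double that satisfies ChatModel structurally."""
    def __init__(self, reply: str) -> None:
        self.reply = reply

    def complete(self, messages: list[dict], tools: Optional[list[ToolSpec]] = None) -> str:
        return f"{self.reply} ({len(tools or [])} tools)"

def run_step(model: ChatModel, prompt: str, tools: list[ToolSpec]) -> str:
    # Workflow code depends only on the protocol, so backends can be swapped freely.
    return model.complete([{"role": "user", "content": prompt}], tools)
```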

6. Design for failure

Every model and tool will fail. Search providers will change behavior. Local servers will go down. Tunnels will break. APIs will rate-limit. A good orchestration layer assumes this and has fallbacks.
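A fallback chain can be a short loop. In this sketch, `call` is a hypothetical function that invokes one model by name. Treating connection errors and timeouts as transient (retry with exponential backoff) and `ValueError`, such as a malformed tool call, as "move to the next model" are assumptions you should adapt to your providers' actual error types.

```python
import time
from typing import Callable, Optional, Sequence

class AllModelsFailed(RuntimeError):
    pass

def call_with_fallbacks(models: Sequence[str], call: Callable[[str], str],
                        retries: int = 2, backoff_s: float = 0.5,
                        errors: Optional[list[str]] = None) -> tuple[str, str]:
    """Try each model in order; returns (model_used, output)."""
    errors = [] if errors is None else errors
    for model in models:
        for attempt in range(retries):
            try:
                return model, call(model)
            except (TimeoutError, ConnectionError) as exc:  # assumed transient: retry
                errors.append(f"{model} attempt {attempt + 1}: {exc!r}")
                time.sleep(backoff_s * (2 ** attempt))
            except ValueError as exc:                         # assumed permanent: next model
                errors.append(f"{model}: {exc!r}")
                break
    raise AllModelsFailed("; ".join(errors))
```

The collected `errors` list doubles as telemetry: it shows which fallback actually recovered and which model keeps failing.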

The routing layer is not a convenience feature. It is the part of the system that makes every other part replaceable.

The Real Goal: Cognitive Load Balancing

The deeper idea behind model-agnostic orchestration is cognitive load balancing.

Every knowledge-work system has many cognitive operations: reading, classifying, extracting, remembering, planning, writing, checking, coding, deciding, escalating. Humans do not use the same level of attention for all of them. AI systems should not use the same model for all of them either.

The future AI stack will look less like a chatbot and more like a distributed cognitive system:

- Memory captures what happened.

- Retrieval brings back what matters.

- Small models filter and structure.

- Local models iterate privately and cheaply.

- Long-context models preserve continuity.

- Frontier models handle high-stakes reasoning.

- The harness routes the work.

- Telemetry improves the routing over time.

That is the shift.

The winning architecture is not “one model to rule them all.”

It is an orchestration layer that treats models as interchangeable workers, each with a cost profile, latency profile, context window, privacy boundary, and skill profile.
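Those five profiles translate directly into a small catalog and a selection function. This is a sketch: the model names and numbers are illustrative, not real prices or benchmarks, and "cheapest model that satisfies every hard constraint, with latency as tiebreaker" is one policy among many.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_mtok: float     # blended USD per million tokens (your own numbers)
    p50_latency_s: float
    context_window: int
    local: bool              # privacy boundary: runs on infrastructure you control
    skills: frozenset[str]

def pick(catalog: list[ModelProfile], skill: str, tokens: int,
         must_be_local: bool = False, max_latency_s: float = float("inf")) -> ModelProfile:
    """Cheapest worker that meets every hard constraint; latency breaks ties."""
    ok = [m for m in catalog
          if skill in m.skills and tokens <= m.context_window
          and (m.local or not must_be_local) and m.p50_latency_s <= max_latency_s]
    if not ok:
        raise LookupError(f"no model can handle {skill!r} with {tokens} tokens")
    return min(ok, key=lambda m: (m.cost_per_mtok, m.p50_latency_s))

# Illustrative catalog; names and numbers are placeholders.
catalog = [
    ModelProfile("frontier", 15.0, 6.0, 200_000, False, frozenset({"plan", "code", "review"})),
    ModelProfile("long-ctx-cheap", 1.0, 3.0, 1_000_000, False, frozenset({"compact", "summarize"})),
    ModelProfile("local-qwen", 0.0, 1.5, 32_000, True,
                 frozenset({"classify", "extract", "draft", "summarize"})),
]
```

A short summary stays local, a 500k-token summary moves to the long-context model, and review goes to the frontier model, all without changing any workflow code.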

Conclusion

Model-agnostic orchestration is becoming the default architecture for serious AI systems because real work is heterogeneous.

Some tasks need power. Some need memory. Some need privacy. Some need speed. Some need cheap repetition. Some need tool access. Some need human review.

A single model can be impressive. But a routed system can be operational.

The next wave of AI infrastructure will not be defined by which frontier API a company picked. It will be defined by whether the company built a harness that can route work across models, memory, tools, and telemetry without locking the workflow to any one provider.

That is where the leverage is: not in choosing the smartest model once, but in designing a system that can keep choosing the right model for every step.